Clojure Tools for Symbolic Artificial Intelligence

EuroClojure 2017 · S. Lynch & S. Johnson · University of Teesside

In Short

This paper is a practical workshop guide to building AI planning and reasoning systems in Clojure, a functional programming language, without relying on machine learning. Instead, it draws on a much older tradition in AI: the idea that intelligence can be encoded as explicit rules, logical relationships, and structured search. The paper walks through how to represent knowledge as facts, write rules that infer new facts from existing ones, and build planning systems that work out a sequence of actions to reach a goal. Think of it as teaching a program to reason step by step through a problem the way a human might, rather than training it on data until it guesses correctly.

What it contributes is accessibility. These techniques — forward chaining, backward chaining, operator-based planning — existed long before this paper, but implementing them cleanly in a modern functional language like Clojure, with concise and readable code, lowers the barrier for developers who want to build explainable, rule-driven AI systems without the complexity of a full machine learning stack.

The Breakdown

Problem

Most developers approaching AI for the first time encounter it through the lens of machine learning: neural networks, training data, probabilistic outputs. But there's an entire class of problems — planning, logical inference, constraint satisfaction — where that paradigm is a poor fit. Before this paper, developers working in Clojure had few accessible, practical resources for building these kinds of systems. The knowledge existed in academic literature, but the bridge to practical implementation was largely missing.

Approach

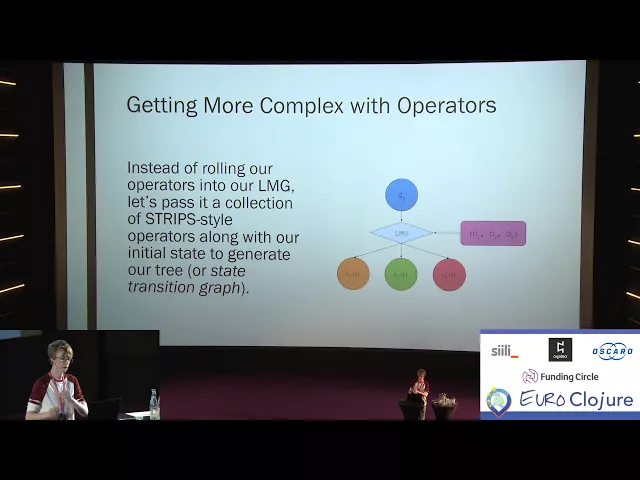

The paper works through a progressive series of hands-on implementations entirely in Clojure, using a symbolic pattern-matching library as its primary tool. It begins with simple search problems (finding a path between states) and builds up through tuple-based fact representation, rule compilation, forward- and backward-chaining inference engines, and finally STRIPS-style planning operators, a formalism from classical AI that describes each action in terms of its preconditions and effects. Everything is shown with working code and concrete examples, from routing flights to planning how an agent picks up and moves objects across a room.
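
To make the idea of tuple-based facts and symbolic pattern matching concrete, here is a minimal sketch. It is written in Python rather than the paper's Clojure, and it does not reproduce the paper's matcher library: the `?x` variable convention, the function names `match` and `query`, and the example facts are all assumptions made for illustration.

```python
# Illustrative sketch only; the paper's own code is Clojure.
# All names and facts below are hypothetical.

def is_var(term):
    """A term is a variable if it is a string starting with '?'."""
    return isinstance(term, str) and term.startswith("?")

def match(pattern, fact, bindings=None):
    """Match one pattern tuple against one fact tuple.

    Returns an extended bindings dict on success, or None on failure.
    """
    bindings = dict(bindings or {})
    if len(pattern) != len(fact):
        return None
    for p, f in zip(pattern, fact):
        if is_var(p):
            if p in bindings and bindings[p] != f:
                return None  # variable already bound to a different value
            bindings[p] = f
        elif p != f:
            return None  # constants must agree exactly
    return bindings

def query(pattern, facts):
    """Return the bindings for every fact that matches the pattern."""
    results = []
    for fact in facts:
        b = match(pattern, fact)
        if b is not None:
            results.append(b)
    return results

# Knowledge is a set of plain tuples.
facts = {
    ("isa", "cup", "object"),
    ("on", "cup", "table"),
    ("on", "book", "shelf"),
}

# "What is on something?" binds ?x and ?y for each matching fact.
print(sorted(b["?x"] for b in query(("on", "?x", "?y"), facts)))
# prints ['book', 'cup']
```

Keeping facts as plain data is what makes this style easy to inspect: a query result is just a dictionary of variable bindings.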

Key Findings

The central finding is that Clojure is a surprisingly natural fit for symbolic AI. Its functional style, immutable data structures, and macro system allow inference engines and planners to be written in remarkably few lines of clean, readable code. Forward chaining — exhaustively applying rules until no new facts can be derived — and backward chaining — working back from a goal to check whether it can be proven — can both be expressed concisely and composed with planning search mechanisms. Crucially, the resulting systems are fully transparent: every inference step can be inspected, traced, and understood.
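
The two inference styles described above can be sketched in a few dozen lines. This is a Python illustration under stated assumptions, not the paper's Clojure code: rules are assumed to be `(antecedents, consequent)` pairs over tuple facts, variables are strings starting with `?`, and every function name is hypothetical. The backward chainer is deliberately simplified: it proves ground goals only, binds open sub-goals from stored facts, and uses a depth limit to guarantee termination.

```python
# Illustrative sketch only; the paper's own code is Clojure.
# All names here (match, forward_chain, prove, ...) are hypothetical.

def is_var(term):
    return isinstance(term, str) and term.startswith("?")

def match(pattern, fact, bindings):
    """Extend bindings so that pattern equals fact, or return None."""
    if len(pattern) != len(fact):
        return None
    bindings = dict(bindings)
    for p, f in zip(pattern, fact):
        if is_var(p):
            if bindings.setdefault(p, f) != f:
                return None
        elif p != f:
            return None
    return bindings

def substitute(pattern, bindings):
    """Replace bound variables in a pattern with their values."""
    return tuple(bindings.get(term, term) for term in pattern)

def match_all(patterns, facts, bindings):
    """Yield every bindings dict that satisfies all patterns at once."""
    if not patterns:
        yield bindings
        return
    for fact in facts:
        extended = match(patterns[0], fact, bindings)
        if extended is not None:
            yield from match_all(patterns[1:], facts, extended)

def forward_chain(facts, rules):
    """Apply every rule repeatedly until no new fact can be derived."""
    facts = set(facts)
    while True:
        derived = {substitute(consequent, b)
                   for antecedents, consequent in rules
                   for b in match_all(antecedents, facts, {})}
        if derived <= facts:
            return facts
        facts |= derived

def prove(goal, facts, rules, depth=10):
    """Work back from a ground goal to stored facts (depth-limited)."""
    if goal in facts:
        return True
    if depth == 0:
        return False
    for antecedents, consequent in rules:
        b = match(consequent, goal, {})
        if b is not None and prove_all(antecedents, b, facts, rules, depth - 1):
            return True
    return False

def prove_all(goals, bindings, facts, rules, depth):
    """Prove each sub-goal in turn, threading bindings through."""
    if not goals:
        return True
    first, rest = substitute(goals[0], bindings), goals[1:]
    if any(is_var(term) for term in first):
        # Simplification: open sub-goals are bound from stored facts only.
        return any(prove_all(rest, b, facts, rules, depth)
                   for fact in facts
                   for b in [match(first, fact, bindings)] if b is not None)
    return (prove(first, facts, rules, depth)
            and prove_all(rest, bindings, facts, rules, depth))

facts = {("parent", "alice", "bob"), ("parent", "bob", "carol")}
rules = [
    ([("parent", "?x", "?y")], ("ancestor", "?x", "?y")),
    ([("parent", "?x", "?y"), ("ancestor", "?y", "?z")], ("ancestor", "?x", "?z")),
]

print(("ancestor", "alice", "carol") in forward_chain(facts, rules))  # prints True
print(prove(("ancestor", "alice", "carol"), facts, rules))            # prints True
```

Forward chaining computes everything that follows from the facts up front, while backward chaining only explores the rules relevant to one question. Both arrive at the same answer here, and each intermediate step is ordinary data that can be printed or traced.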

Real-World Implications

At the time of publication, the most immediate applications were in domains that require structured, explainable decision-making: robotics, game AI, workflow automation, and diagnostic systems. The STRIPS-style planner demonstrated in the paper takes a start state, a goal, and a set of operators, and returns a sequence of actions that gets from one to the other, which maps directly onto real engineering problems. More broadly, the paper showed that developers don't always need a machine learning model to build intelligent behaviour into a system; sometimes a well-designed rule engine is faster, cheaper, more auditable, and more reliable.
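
As a rough illustration of what a STRIPS-style planner does, the sketch below searches breadth-first from a start state to a goal using operators defined by preconditions, an add list, and a delete list. It is Python rather than the paper's Clojure, and it is not the paper's planner: the `Operator` structure, the function names, and the small room scenario are assumptions, and the operators are written out fully grounded (no variables) to keep the example short.

```python
# Illustrative sketch only; the paper's own code is Clojure.
# Operator, apply_op, plan and the room scenario are hypothetical.
from collections import deque, namedtuple

# An operator lists what must hold (pre), what it adds, and what it deletes.
Operator = namedtuple("Operator", "name pre add delete")

def apply_op(op, state):
    """Return the successor state after applying an applicable operator."""
    return frozenset((state - op.delete) | op.add)

def plan(start, goal, operators):
    """Breadth-first search for a shortest operator sequence reaching the goal."""
    start, goal = frozenset(start), frozenset(goal)
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, steps = frontier.popleft()
        if goal <= state:  # every goal fact holds in this state
            return steps
        for op in operators:
            if op.pre <= state:  # preconditions satisfied
                successor = apply_op(op, state)
                if successor not in seen:
                    seen.add(successor)
                    frontier.append((successor, steps + [op.name]))
    return None  # the goal is unreachable with these operators

places = ["door", "table", "shelf"]

# The agent can walk between any two distinct places.
operators = [
    Operator(f"move {a}->{b}",
             pre=frozenset({("at", "agent", a)}),
             add=frozenset({("at", "agent", b)}),
             delete=frozenset({("at", "agent", a)}))
    for a in places for b in places if a != b
]
operators += [
    Operator("pick up box at table",
             pre=frozenset({("at", "agent", "table"), ("at", "box", "table"),
                            ("hand", "empty")}),
             add=frozenset({("holding", "box")}),
             delete=frozenset({("at", "box", "table"), ("hand", "empty")})),
    Operator("drop box at shelf",
             pre=frozenset({("at", "agent", "shelf"), ("holding", "box")}),
             add=frozenset({("at", "box", "shelf"), ("hand", "empty")}),
             delete=frozenset({("holding", "box")})),
]

start = {("at", "agent", "door"), ("at", "box", "table"), ("hand", "empty")}
goal = {("at", "box", "shelf")}

print(plan(start, goal, operators))
# prints ['move door->table', 'pick up box at table', 'move table->shelf', 'drop box at shelf']
```

Breadth-first search is chosen here only because it is simple and returns a shortest plan; a practical planner would typically use variables in its operators and a more directed search strategy.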

So, What?

Here's the thing about a 2017 paper on symbolic AI: in 2026, it looks more relevant than it did in 2019.

In the years immediately following this work, the field was swept up in the transformer revolution. "Attention Is All You Need" landed in 2017, and within a few years it felt like neural networks had made classical AI methods obsolete. Why hand-craft rules when a large language model could just learn the patterns?

We now know that's an incomplete picture. LLMs hallucinate. They are unreliable at multi-step planning. They struggle with formal logical reasoning and produce outputs that are difficult to audit or explain, which is a serious problem in regulated industries, safety-critical systems, and, above all, cybersecurity. What the field has rediscovered is that hybrid systems, which combine the language understanding of neural models with the structured reasoning of classical methods, can outperform pure neural approaches on planning and inference tasks. This is the space that research into neuro-symbolic AI is now exploring, and reasoning-focused systems like OpenAI's o-series models are aimed at the same weaknesses.

The techniques in this paper sit right at the heart of that conversation. Rule-based inference engines and operator planners are precisely the kind of structured reasoning layer that modern AI systems are being augmented with. In offensive security specifically, automated planning (working out the sequence of steps that leads from initial access to a target) is one of the hardest open problems in AI-driven penetration testing. STRIPS-style formalisms have been applied directly in academic research on automated attack graph traversal and exploit chaining.

The takeaway isn't that Clojure or this specific toolkit is the future. It's that the ideas here (explicit knowledge representation, auditable inference, goal-directed planning) are foundational primitives that keep resurfacing because they solve problems that statistical models fundamentally cannot. Understanding them deeply, as this work demonstrates, is an asset that compounds.

Author's note: These publication summaries are AI-assisted. I use AI to present my work in a consistent, accessible way — the research and writing behind each publication is entirely my own.